Hi👋 I am Zhouqi Hua (华洲琦).

I am a first-year PhD student at Fudan University, in a joint program with the Large Model Center of Shanghai AI Laboratory. I am fortunate to be advised by Dr. Wenwei Zhang, Dr. Kai Chen, and Prof. Dahua Lin. Before that, I received my bachelor's degree from Tongji University in 2025, under the supervision of Prof. Yufei Chen and Prof. Zhangkai Ni.

My research focuses on generalization in LLMs, including length generalization and compositional generalization. I am currently interested in investigating the mathematical abilities of LLMs.

Education

- Shanghai AI Lab, Research Intern @ Large Model Center (Joint Ph.D. Student), Sep. 2025 - present
- Fudan University, Ph.D. Student in Computer Science, Sep. 2025 - present
- Tongji University, B.S. in Computer Science, Sep. 2021 - Jul. 2025

Honors & Awards

- 🥇 First Prize, National Intelligent Car Competition, 2024
- 🥈 Second Prize, CCCC-MAIC (Hosted by Apple Inc.), 2024
- 🥇 Tongji Excellent Student Scholarship (First Prize), 2024
- 🥇 Tongji Excellent Student Scholarship (First Prize), 2023

News

Selected Publications

Intern-S1: A Scientific Multimodal Foundation Model

Lei Bai, Zhongrui Cai, ..., Zhouqi Hua, ..., Yu Qiao et al.

Technical Report

Intern-S1 is a large multimodal MoE foundation model trained with massive scientific data and mixture-of-rewards reinforcement learning, achieving SOTA performance in scientific reasoning and professional tasks while remaining competitive in general reasoning among open-source models.

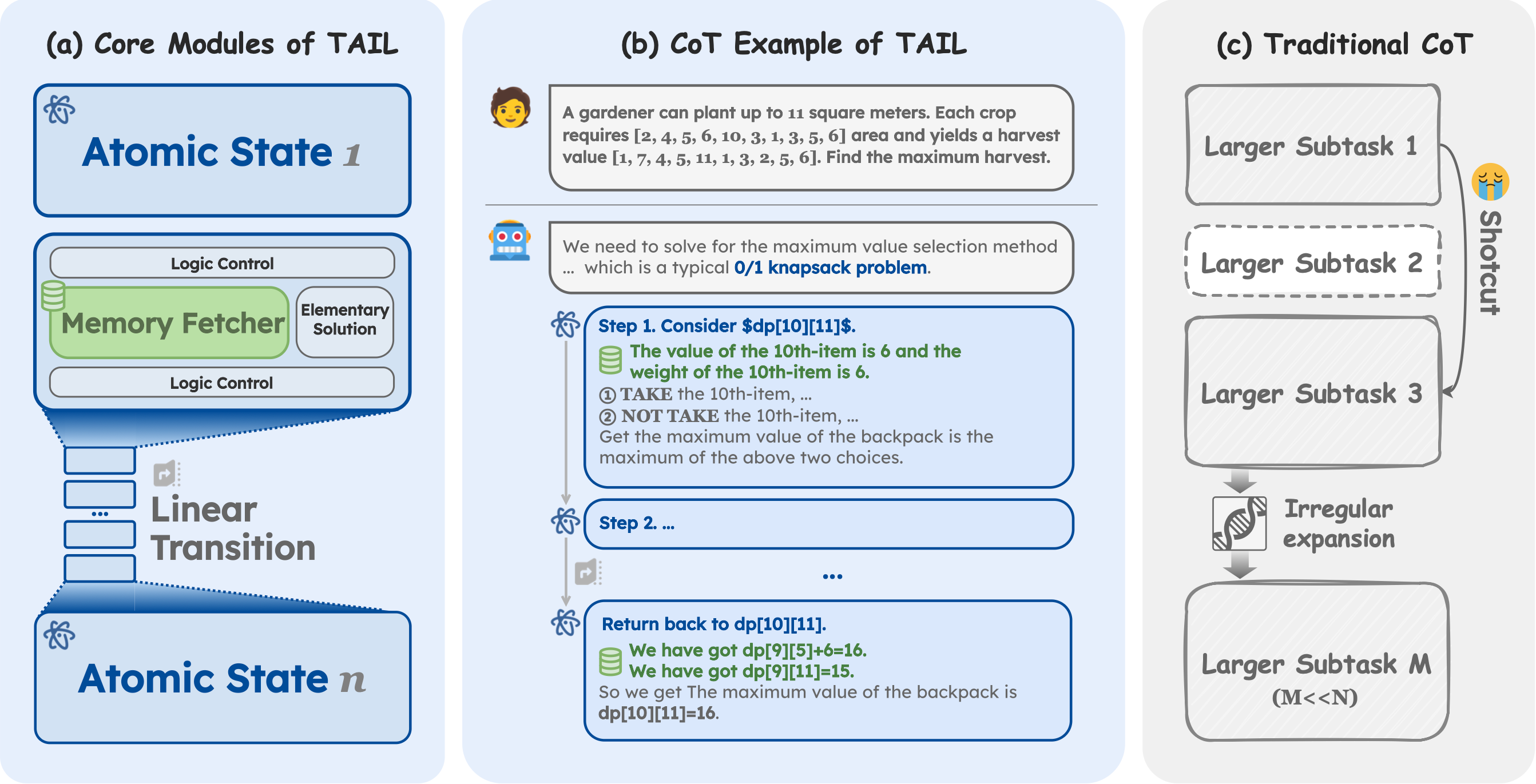

The Imitation Game: Turing Machine Imitator is Length Generalizable Reasoner

Zhouqi Hua, Wenwei Zhang, Chengqi Lyu, Yuzhe Gu, Songyang Gao, Kuikun Liu, Dahua Lin, Kai Chen

International Conference on Learning Representations (ICLR), 2026

Turing Machine Imitation Learning (TAIL) is a synthetic chain-of-thought framework that instills Turing machine–like execution in LLMs, enabling robust length generalization for computable reasoning. On 18 challenging tasks, a 7B TAIL model outperforms the 671B DeepSeek-R1, establishing a new state of the art.
