2026

Intern-S1-Pro: Scientific Multimodal Foundation Model at Trillion Scale

Yicheng Zou, Dongsheng Zhu, ..., Zhouqi Hua, ..., Lei Bai et al.

Technical Report

Intern-S1-Pro is a one-trillion-parameter scientific multimodal foundation model that enhances general and scientific capabilities through advanced agent functionalities and specialized task mastery across multiple scientific disciplines.

2025

Intern-S1: A Scientific Multimodal Foundation Model

Lei Bai, Zhongrui Cai, ..., Zhouqi Hua, ..., Yu Qiao et al.

Technical Report

Intern-S1 is a large multimodal MoE foundation model trained with massive scientific data and mixture-of-rewards reinforcement learning, achieving SOTA performance in scientific reasoning and professional tasks while remaining competitive in general reasoning among open-source models.

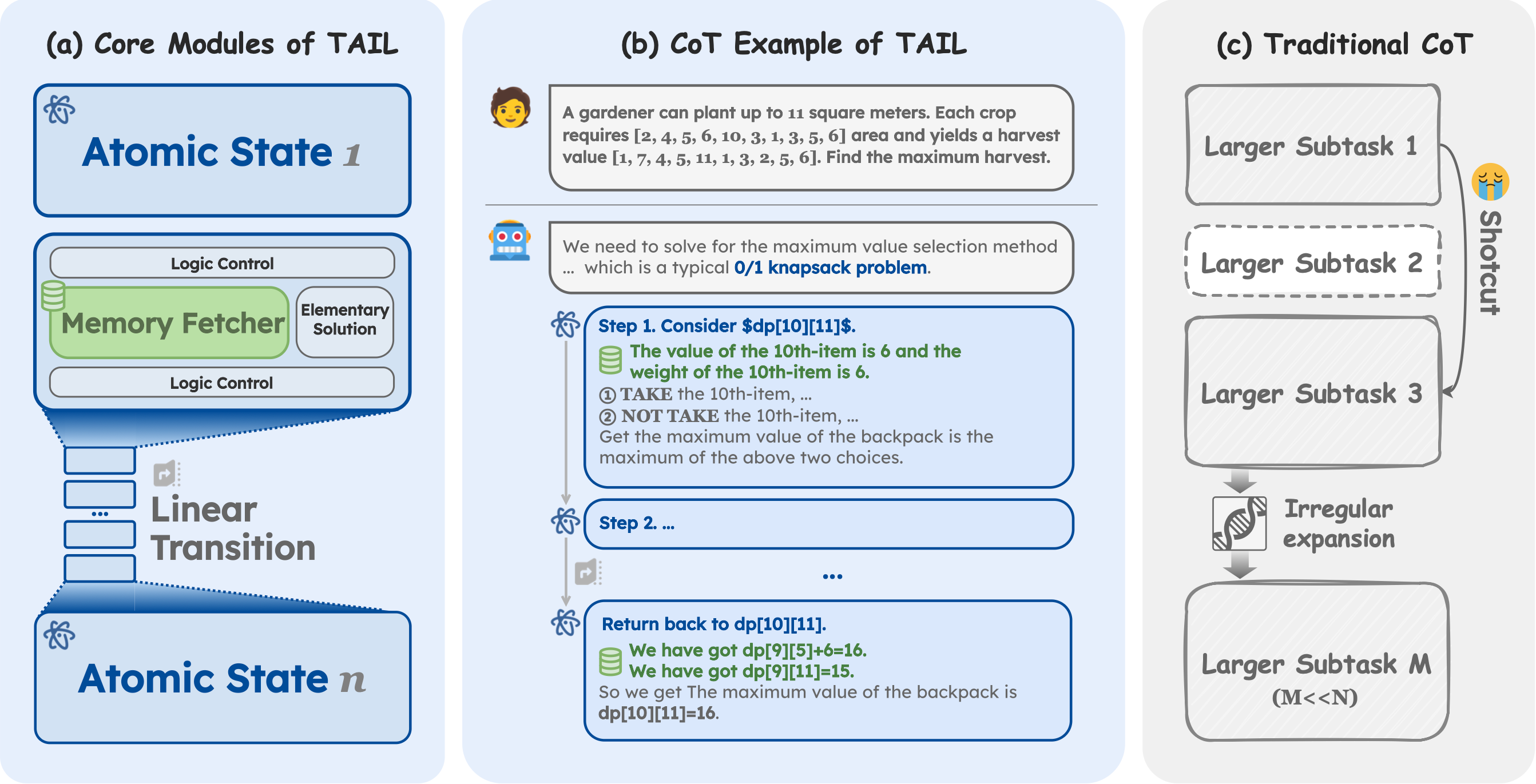

The Imitation Game: Turing Machine Imitator is Length Generalizable Reasoner

Zhouqi Hua, Wenwei Zhang, Chengqi Lyu, Yuzhe Gu, Songyang Gao, Kuikun Liu, Dahua Lin, Kai Chen

International Conference on Learning Representations (ICLR 2026)

Turing Machine Imitation Learning (TAIL) is a synthetic chain-of-thought framework that instills Turing machine–like execution in LLMs, enabling robust length generalization for computable reasoning. On 18 challenging tasks, a 7B TAIL model outperforms the 671B DeepSeek-R1, establishing a new state of the art.